Over the summer months, ESC Insight’s Ben Robertson has been looking at the voting data supplied by the EBU for all the individual jury members, the combined jury votes and the public televotes from the 2014 Eurovision Song Contest. There is much to learn about our beloved Song Contest in these numbers.

As the new season begins, we look back at the project and draw our own conclusions on a voting/scoring format for Eurovision 2015.

The Work So Far

On the night of the Grand Final of Eurovision 2014, the European Broadcasting Union (EBU) also released online the full breakdown of results of the Eurovision Song Contest 2014, including the televoting rankings and the rankings of each individual jury member. Over the summer on ESC Insight we have taken the opportunity, for the first time, to look in depth at the background of Eurovision voting. We started by looking at how the results would have differed under the different voting systems used over the last ten years (Episode 1), followed by a statistical analysis of the impact of running order on the contest (Episode 2). We examined the relationship between the voting patterns of jury members and their individual demographics (Episode 3) and looked at the strength of any collusion between jury members (Episode 4). Finally, we manipulated the Eurovision voting data to create some fantasy Eurovision scoreboards and see whether we could create alternative situations in the voting (Episode 5).

This final piece draws conclusions from the findings of all five previous parts of Voting Insight. Our aim is to recommend our own voting system for the Eurovision Song Contest, one that improves on the current methods.

The Key Findings In Brief

Much of the maths behind our conclusions is set out in depth in this article. We make no apology for taking you through a reasonable level of statistics, as it shows the scientific evidence for the changes we wish to make. However, to make sure our main points come across, here is a summary of the findings that we believe would give our Eurovision Song Contest even more integrity.

– We conclude that many songs suffer directly from negative voting. We argue that it is unnatural for jury members to rank every song from first to last, and that doing so actually limits the artistic integrity of each competing song.

– We conclude that the impact of running order bias is statistically significant. Human interference in setting the running order could cause problems in the future, and we believe any perceived benefits are not worth the risk. The running order should be chosen in a way that is at least more random than it currently is.

– We conclude that tougher limits on the backgrounds of jury members are needed to ensure a better demographic balance on each Eurovision jury. We note how difficult it is for each jury to balance gender, age and professional background, and we also note that jury voting is in fact more statistically random than televoting. To help reduce this gap we propose increasing the number of jury members in each country.

– We conclude that similarity between the votes of jury members from the same country is by far the most statistically significant voting pattern we found: juries that watch the show together vote more like each other than any other factor we measured would predict. We believe it is more than OK for juries to watch the show together, and we would actively encourage discussion of the merits of each song. However, we believe extra effort is needed to make sure voting takes place in secret, so that jury members have less chance to influence each other's votes.

– We conclude from all this that a new voting system is needed for the Eurovision Song Contest. We do not believe Eurovision is in crisis, and the voting needs only some subtle tweaks to make it fair and fabulous for all. The new system is explained and demonstrated in full at the end of this article.

The Emergence Of The Negative Voting Trend

Since 2013, each jury member has had to give their professional opinion on every song in the competition, ranking them all from first place to last place. Headlines about the split between televoting and jury scores are usually minor and rarely reach national or international print. This year, with the full scores made public, some of them did reach a wider audience. In both the UK and Ireland, Poland's ‘We Are Slavic’ was the televoting favourite but scored zero points, because it finished last with both juries, creating a post-contest scandal that overshadowed interest in our winner.

Donatan was accused of ‘sexism’ by some jurors who did not see the funny side of the Polish entry (Photo: Anders Putting – EBU)

To explore the impact of negative voting amongst jury members, taking the one controversial song highlighted in the media is not enough; we need to establish a trend. To do this we look back to Episode One of Voting Insight, where we calculated the mean and standard deviation of each song's results from the juries and the televotes across all the voting countries. The standard deviation is the most important figure here. It measures the spread of the results: the bigger the number, the more spread out the voting for that song.
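As a minimal sketch of this calculation, the Python below computes the mean and standard deviation of the placings one song received from individual jury members. The placings are hypothetical, and using the population standard deviation (`pstdev`) rather than the sample version is an assumption; the article does not say which was used.

```python
from statistics import mean, pstdev

def placing_summary(placings: list[int]) -> tuple[float, float]:
    """Return the mean and standard deviation of one song's jury placings.

    Each entry is the rank (1 = best) that one juror gave the song.
    pstdev (population standard deviation) is an assumption here.
    """
    return mean(placings), pstdev(placings)

# Hypothetical placings from eight jurors, not real 2014 data.
consensus = [7, 8, 8, 9, 9, 10, 10, 11]   # everyone broadly agrees
divisive = [1, 2, 2, 3, 14, 15, 15, 16]   # loved or hated

print(placing_summary(consensus))
print(placing_summary(divisive))
```

Both songs sit near the middle on average, but the divisive song's standard deviation is more than five times larger, which is the loved-or-hated pattern this section goes looking for.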

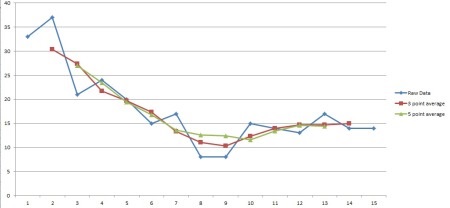

To use this in our analysis, we take the three songs with the highest and the three with the lowest standard deviations in each Semi Final and in the Grand Final. For each song we plot how many jury members gave it each placing, so if three jury members ranked a song in 1st place, that shows as a 3 in the first column.
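The counting behind each graph amounts to a simple histogram of placings. The function name `placing_histogram` and the example placings below are hypothetical.

```python
from collections import Counter

def placing_histogram(placings: list[int], n_songs: int) -> list[int]:
    """Count how many jurors gave a song each placing from 1st to n_songs-th.

    Index 0 holds the number of 1st places, index 1 the number of 2nd
    places, and so on, matching the columns of the graphs.
    """
    counts = Counter(placings)
    return [counts.get(position, 0) for position in range(1, n_songs + 1)]

# Hypothetical example: three jurors ranked the song 1st, so column one shows 3.
print(placing_histogram([1, 1, 1, 4, 4, 16], n_songs=16))
```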

We also plot 3-point and 5-point moving averages on each graph to show the general trend of the voting, as the difference between a song being ranked 19th and 20th is fairly arbitrary.
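A centred moving average is one way to produce such trend lines. The sketch below assumes a centred window and leaves the end placings, where no full window fits, empty (`None`); this may differ from how the original graphs were drawn, and the counts are hypothetical.

```python
from typing import Optional

def centred_moving_average(counts: list[int], window: int) -> list[Optional[float]]:
    """Smooth a placing histogram with a centred moving average.

    `window` must be odd (3, 5, 7, ...) so that it centres on one placing.
    Placings too close to either end for a full window are left as None.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    smoothed: list[Optional[float]] = []
    for i in range(len(counts)):
        if i < half or i + half >= len(counts):
            smoothed.append(None)
        else:
            smoothed.append(sum(counts[i - half : i + half + 1]) / window)
    return smoothed

counts = [3, 2, 1, 0, 1, 2]  # hypothetical placing counts for a six-song show
print(centred_moving_average(counts, 3))  # prints: [None, 2.0, 1.0, 0.6666666666666666, 1.0, None]
```

A wider window (5 or 7 points) smooths more but leaves more placings at each end without a value.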

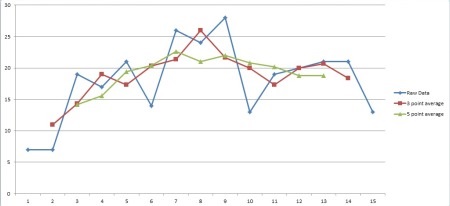

First, below are the graphs for the three songs with the lowest standard deviations, and so the smallest spread of data, in the Semi Finals and the Grand Final. The vertical axis shows how many jury members gave the song each placing, and the horizontal axis shows the placing.

Graph of Semi One Three Lowest Standard Deviation Scores

Graph of Semi Two Three Lowest Standard Deviation Scores

Graph of Grand Final Three Lowest Standard Deviation Scores

All of these graphs roughly follow the bell shape of a normal distribution, with a single peak in the middle and the placings spread roughly evenly on either side of it. Even though the songs finished in different positions in the final results (Ukraine, Albania and Portugal, for example, all finished in very different places in Semi Final One), the overall trend holds. Portugal and France were not victims of negative voting in a statistical sense. Both were rated poorly by the jury members, but there is no upward ‘kick’ towards the bottom of the rankings like the one we see for songs with a higher standard deviation.

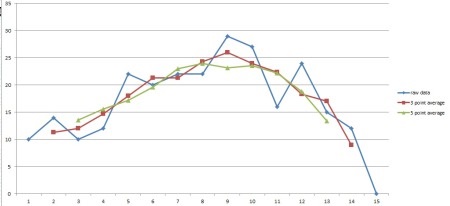

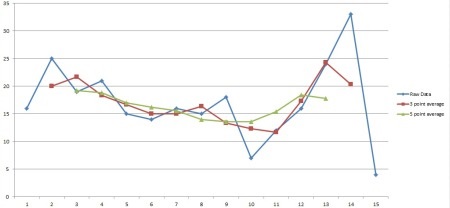

Graph of Jury Placings Given to France in the Eurovision Song Contest Grand Final

When we instead take the songs with the highest standard deviations in the jury voting, we do not see a normal distribution curve centred on the middle. Instead the curve appears to be bimodal: there are two peaks, one at either side of the graph. This is our evidence for negative voting. The jury members who liked these songs ranked them highly, but the songs also alienated other jury members, who ranked them very low as a result. What sets these results apart from the ones above is the very low section in the middle: these songs were rarely rated as average; they were either loved or hated.
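One rough way to tell the two shapes apart mechanically, rather than by eye, is to count the peaks in the smoothed placing counts: a bell-shaped song has one and a loved-or-hated song has two. This is an illustrative heuristic with hypothetical smoothed values, not the method used in the article, which reads the shapes from the graphs.

```python
def count_peaks(values: list[float]) -> int:
    """Count local maxima in a smoothed placing histogram.

    Runs of equal neighbouring values are merged first, so a flat top counts
    once; an end point counts as a peak if it is higher than its only neighbour.
    """
    merged = [v for i, v in enumerate(values) if i == 0 or v != values[i - 1]]
    peaks = 0
    for i, v in enumerate(merged):
        higher_than_left = i == 0 or v > merged[i - 1]
        higher_than_right = i == len(merged) - 1 or v > merged[i + 1]
        if higher_than_left and higher_than_right:
            peaks += 1
    return peaks

# Hypothetical smoothed placing counts across seven placings.
bell_shaped = [0.3, 1.0, 2.3, 3.0, 2.3, 1.0, 0.3]      # consensus song
loved_or_hated = [2.7, 2.0, 1.0, 0.3, 0.7, 1.7, 2.3]   # divisive song
print(count_peaks(bell_shaped), count_peaks(loved_or_hated))  # prints: 1 2
```

On raw, unsmoothed counts every small bump would register as a peak, which is one reason to apply the moving averages first.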

Graph of Semi One Three Highest Standard Deviation Scores

Graph of Semi Two Three Highest Standard Deviation Scores

For the Semi Finals, we again have songs with a wide spread of success (ranging from Armenia to Georgia), but each produces a bimodal graph, which we demonstrate clearly by combining them in the graphs above. The cut-off point appears to be around 10th place.

Below 10th place, the number of placings appears to rise again, suggesting a pattern of negative voting by jury members who are then forced to evaluate how much they dislike the entry in question.
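For a song already identified as bimodal, the cut-off can be located as the placing where the smoothed curve is lowest. The sketch assumes smoothed counts such as those from a moving average, with `None` where no full window exists; the values and the function name are hypothetical. For a bell-shaped song the lowest point would simply be at one end, so the result is only meaningful for divisive songs.

```python
from typing import Optional

def trough_placing(smoothed: list[Optional[float]]) -> int:
    """Return the placing (1 = 1st) where a smoothed placing histogram is lowest.

    Placings without a smoothed value (None) are skipped; ties go to the
    earliest placing because tuples compare by value, then by placing.
    """
    candidates = [
        (value, placing)
        for placing, value in enumerate(smoothed, start=1)
        if value is not None
    ]
    if not candidates:
        raise ValueError("no smoothed values to search")
    return min(candidates)[1]

# Hypothetical smoothed counts for a divisive song in a 16-song Semi Final.
semi_final = [None, 2.0, 1.6, 1.2, 1.0, 0.8, 0.6, 0.4, 0.4, 0.2,
              0.6, 0.8, 1.0, 1.4, 1.8, None]
print(trough_placing(semi_final))  # prints: 10
```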

We argue that placings below 10th in each Semi Final should not be used in future editions of the Song Contest. We do not want to know how bad a song is, only how good it is. As 10th place appears to be the split between ‘good’ and ‘not good’ for these divisive songs, we would not ask Semi Final juries to rank beyond 10th place.

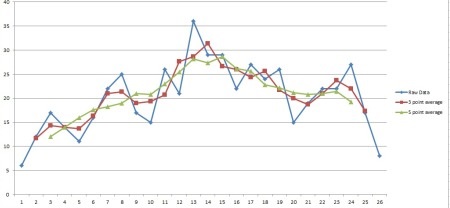

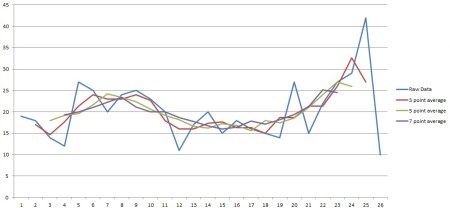

Graph of Grand Final Three Largest Standard Deviation Scores

In the Grand Final, our three largest standard deviations also show a bimodal pattern. As we would expect with more songs, the low point of the curve between the two peaks sits further down the rankings than 10th place. Because the data is spread so widely, from 1st to 26th place, a 5-point average alone does not show the true position of this trend; adding a 7-point average to the graph above suggests it lies at around 15th place.

Applying the same principle, we argue that for these divisive songs most votes fall either in the top 15 places or in the bottom 10 in the Grand Final. Again, we are only interested in how well liked a song is by the juries, not which song they dislike the most. We therefore recommend that no Grand Final jury should be asked to rank more than its top 15 songs.

Both of these suggestions are taken into consideration for our improved voting system at the end of this article.

The Running Order of the Song Contest

The previous two editions of the Eurovision Song Contest have used producer-led running orders, with the producers deciding the order in which each song appears. What we showed in Episode 2 is that the running order has a measurable, statistically significant impact even on the results of a single contest.

Conchita Wurst is the first winner in eleven years not to sing in the final ten songs of the Grand Final

We also note that the producers were not fully in control of the running order, as songs were first drawn into either the first or the second half of the show. We find that within these halves the running order bias is stronger than across the show as a whole. We can therefore reaffirm what other studies have found: in the effort to make the running order interesting, songs placed later in the show to keep viewers watching also gain more support from voters, be they at home or sitting on a jury.
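The article does not say which test Episode 2 used, so the sketch below swaps in one standard option: Spearman's rank correlation between running-order slot and points scored, implemented directly so it needs no third-party library. The data are hypothetical, and a positive coefficient means later slots tended to score more. Judging statistical significance would need a further step (for example a p-value for the coefficient), which is not shown.

```python
def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1 (smallest) upwards; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (spread_x * spread_y)

def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho: the Pearson correlation of the two sets of ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical data: running-order slot and points won for ten songs.
slots = list(range(1, 11))
points = [20, 35, 15, 40, 50, 30, 60, 45, 80, 70]
print(f"Spearman rho = {spearman(slots, points):.2f}")  # prints: Spearman rho = 0.82
```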

Although the effect is measurable, we are only talking about a few percentage points either way. Even so, we at ESC Insight believe it is significant enough that the running order should be decided by a 100% random method. Before the contest we were able to plot our ideas about the Copenhagen contest. That we could say what we did from the information available suggested links that, frankly, distract from the competitive aspect of the songs performing as they should on the night of the Grand Final. Countries may in future use the running order to complain about near-misses in qualifying. That is acceptable when luck is involved, but when people have made a judgement and must stand by it, it is not a situation we want any delegation or any producer to be part of.

The perceived benefits of a ‘better show’ created by manipulating the running order are not worth the risks of any fallout, and on the strength of our findings we argue that the running order should no longer be producer-led. As a compromise, should the EBU believe variety is a vital component of the show, a producer-led running order could be kept but started from a random position within that order.
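Both options in this paragraph are straightforward to express in code. The sketch shows a fully random draw and the compromise of rotating a producer-led order to a random starting song. The song names and function names are hypothetical, and the fixed seed is only there to make the example repeatable, not a suggestion for how a real draw should be run.

```python
import random

def random_running_order(songs: list[str], rng: random.Random) -> list[str]:
    """Fully random draw: every ordering of the songs is equally likely."""
    order = list(songs)  # copy so the caller's list is not shuffled in place
    rng.shuffle(order)
    return order

def rotated_running_order(producer_order: list[str], rng: random.Random) -> list[str]:
    """Compromise: keep the producer's sequence but start it at a random song,
    wrapping the songs before that point round to the end of the show."""
    start = rng.randrange(len(producer_order))
    return producer_order[start:] + producer_order[:start]

rng = random.Random(2015)  # fixed seed only so the example is repeatable
producer_order = ["Song A", "Song B", "Song C", "Song D", "Song E"]
print(random_running_order(producer_order, rng))
print(rotated_running_order(producer_order, rng))
```

Under the rotation every song is still equally likely to land in any slot, but each song keeps its neighbours apart from the single break where the order wraps round, so most of the pacing the producers designed survives.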

Making A Jury That Deserves To Be Fully Accountable

When we analysed the names of the jury members of the Eurovision Song Contest, we were troubled by the vast differences in the ‘professional’ backgrounds each country thought appropriate for a Eurovision jury. The eclectic mix of students, trophy designers and government officials should never have got close to a system that prides itself on a professional jury with integrity. At ESC Insight we were shocked at how loosely the rules had been applied when the list was released on May 1st. Under no circumstances should Armenian sculptors, Swedish dancers or Belarusian Culture Ministers be asked to sit on the jury of a Song Contest that currently insists ‘vocal capacity’ is the top criterion in judging a performance. A tighter line and clearer guidance are vital for the future.

We also found that the juries were not balanced closely enough in terms of gender or age. 30 of the 37 juries had a majority of male members, and the average age of the oldest jury is almost double that of the youngest. While we accept there will be differences between juries and would not argue for strict equality, the range of these figures is too wide to accept that the juries are ‘balanced’, as the EBU describes them.
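The two balance figures in this paragraph can be computed as below. The three juries are hypothetical five-member line-ups, not the real 2014 juries, and counting a ‘majority’ as more than half of the members being male is an assumption.

```python
from statistics import mean

# Hypothetical juries: (gender, age) for each of five members.
juries: dict[str, list[tuple[str, int]]] = {
    "Country A": [("M", 25), ("M", 30), ("F", 28), ("M", 35), ("F", 32)],
    "Country B": [("M", 55), ("F", 60), ("M", 58), ("M", 62), ("F", 50)],
    "Country C": [("F", 40), ("F", 45), ("M", 38), ("F", 42), ("M", 44)],
}

def balance_report(juries: dict[str, list[tuple[str, int]]]) -> tuple[int, float]:
    """Return (number of male-majority juries, oldest / youngest average jury age)."""
    male_majority = sum(
        1
        for members in juries.values()
        if sum(gender == "M" for gender, _ in members) > len(members) / 2
    )
    average_ages = [mean(age for _, age in members) for members in juries.values()]
    return male_majority, max(average_ages) / min(average_ages)

majority, age_ratio = balance_report(juries)
print(f"{majority} of {len(juries)} juries male-majority; age ratio {age_ratio:.1f}")
# prints: 2 of 3 juries male-majority; age ratio 1.9
```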

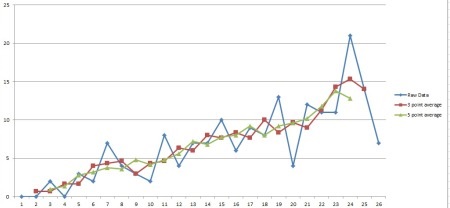

Another factor is the added value a jury is meant to provide. We would expect professional juries to show stronger opinions and to be a more reliable indicator of a song’s worth. However, a fascinating statistical aside from our correlation analysis of the running order results showed us that juries are actually quite a random group of people. On average, an individual juror's votes correlate less with their own votes in the semi final than the votes of an entire country of varied televoters do with theirs. Mathematically, the juries showed more confusion in their opinions than certainty in their judgements.

There is nothing wrong with how individual jury members vote, but this noisy data can easily come through in the points read out on the Saturday night. To make the jury vote robust enough to be a reliable indicator of a song’s value, we would need larger juries. We at ESC Insight therefore propose doubling the jury size to ten people in each country as a good step towards achieving this.
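Why a bigger jury should help can be illustrated with a toy simulation. It assumes (this is not EBU data) that each song has a hidden ‘true’ quality and that every juror scores it as that quality plus independent personal noise, then measures how closely the summed jury scores track the true quality for juries of five and ten. The noise level, song count and other parameters are arbitrary choices.

```python
import random
from statistics import mean

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (spread_x * spread_y)

def jury_accuracy(jury_size: int, n_songs: int = 26, noise: float = 3.0,
                  trials: int = 500, seed: int = 2014) -> float:
    """Average correlation between summed jury scores and hidden song quality.

    Toy model (an assumption): quality ~ N(0, 1) for each song, and each
    juror's score is that quality plus independent N(0, noise^2) taste.
    """
    rng = random.Random(seed)
    correlations = []
    for _ in range(trials):
        quality = [rng.gauss(0.0, 1.0) for _ in range(n_songs)]
        jury_scores = [sum(q + rng.gauss(0.0, noise) for _ in range(jury_size))
                       for q in quality]
        correlations.append(pearson(quality, jury_scores))
    return mean(correlations)

for size in (5, 10):
    print(f"jury of {size}: average correlation {jury_accuracy(size):.2f}")
```

In this model the ten-person jury tracks the underlying quality more closely, because independent noise partly cancels out as more scores are added. The benefit shrinks if jurors' errors are not independent, for example if they influence each other in the same room, which is the concern raised in the next section.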

A Voting System That Will Hold Each Jury Member To Account

All our analysis has been a hunt for the biggest trends in jury composition. Are there songs that are loved significantly more by younger jury members, by female members, or by those with a different professional background? We do find some trends of this kind, and although they are noticeable and newsworthy (for example how ), the gulf between these two figures is not large.

By far the biggest correlation between jury members lies between those who voted together in the same room, regardless of any trends based on their make-up. We know that jury members watch the show together and get the chance to discuss it. Not every jury member takes part in that discussion, and some consciously avoid it (such as Sweden’s Elli Flemstrom), but some influence and learning from each other is going to be a natural and unavoidable part of the process.

What is shocking is how high the correlations between some jury members were. Georgia’s result in the final was based on televoting alone because of the ‘statistically impossible’ correlation spotted in its jury, while jury members from Azerbaijan, Montenegro and Belarus all correlated with each other above 0.900, a worryingly enormous correlation in tastes.
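A pairwise check of this kind can be sketched as follows: compute the correlation between every pair of jurors' rankings and flag the pairs above 0.900. Using Pearson's correlation on the rank numbers (equivalent to Spearman's when rankings have no ties) is an assumption about the method, and the jurors and rankings are hypothetical.

```python
from itertools import combinations

def pearson(x: list[int], y: list[int]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (spread_x * spread_y)

def flag_similar_jurors(rankings: dict[str, list[int]],
                        threshold: float = 0.9) -> list[tuple[str, str, float]]:
    """Return every pair of jurors whose rankings correlate above the threshold."""
    flagged = []
    for a, b in combinations(sorted(rankings), 2):
        r = pearson(rankings[a], rankings[b])
        if r > threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged

# Hypothetical rankings of six songs (1 = best) by three jurors.
rankings = {
    "Juror A": [1, 2, 3, 4, 5, 6],
    "Juror B": [1, 2, 3, 4, 6, 5],
    "Juror C": [6, 5, 4, 3, 2, 1],
}
print(flag_similar_jurors(rankings))  # prints: [('Juror A', 'Juror B', 0.943)]
```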

Nikki gave Armenia, Sweden and The Netherlands her bottom three scores in the Semi Final. Her fellow jury members didn’t vote much differently.

If there are sinister, underhand influences on jury members, no magic formula is going to stop them. Watching the show individually could help, but would almost certainly not be worth the extra upheaval to produce. Delaying the deadline for submitting votes would also make it logistically difficult to prepare the voting order for the Saturday Grand Final in time to run through it in the Dress Rehearsal.

There is only so much that can be done; however, separating the act of giving points from the viewing could help. Juries should feel free to discuss the finer points of songs if they wish and make notes on what worked and what didn’t, but each member should cast their points in secret, away from the other members. This small step at least separates the viewing from the voting and may remove the direct influence of other people.

Creating The Voting System That Will Give The Best Balance

Despite the flaws we have laid out above, the Eurovision Song Contest is far from being in crisis. To use a phrase recently coined about the new Eurovision logo, we are in need of ‘evolution, not revolution.’ Eurovision voting is steeped in tradition and history, and more than anything it works, it excites, and it is part of the contest’s identity.

With this in mind, we have to think carefully about how to achieve what we want through the mathematics of any voting calculation. For the EBU to adopt a voting system that did not give ‘douze points’ to the winning song would require an incredibly certain mathematical conviction that the current system is a failure. We don’t have that. Similarly, 50/50 voting between the juries and televoting is popular with many countries and fans and is here to stay. Furthermore, any changes need to be kept simple. Any twist to the voting system must not add layers of confusion to a Song Contest in which fully explaining the voting already takes a video full of fancy graphics.

The biggest stumbling block of the current voting system, we believe, is the requirement for jury members to rank every song from first place to last place. This means jury members can completely kill a song’s chances, as happened in numerous cases at this year’s contest (Armenia to Austria, the UK to Poland and Belgium to Armenia).

We have seen above that the voting of some songs in the Song Contest is not uniformly spread. Some songs divide opinion and are either loved or hated. That has happened to Eurovision songs in the past and will continue to happen in the future. Our voting system should not overly penalise songs that do not get agreement from jury members.

Average: Tinkara got the middling mean score of 15.03 from juries in the Grand Final with the lowest standard deviation of all twenty-six songs

For songs with a high standard deviation in the Semi Final jury voting, we notice an upward ‘kick’ in the voting beginning at about 10th place; in the Grand Final the kick starts slightly later, at around 15th place. We argue that this marks the split between positive voting (the song is liked) and jury members deciding how bad a song is (how much do I dislike it compared with the others).

This is wrong. Eurovision is not a contest where people rate what they don’t like; they give points to what they do like. Working out how bad a song is is not natural, and it is not helpful when judging works of art such as Eurovision performances. We therefore conclude that jury members should not have to rank every song from first place to last place.

Mathematically speaking, the larger number of songs in the Grand Final suggests we would be right to ask jury members to vote for more songs in the Saturday night show. However, using a different voting system across the three shows would not fit with our wish for a simple-to-understand voting procedure. We therefore propose the smaller of the two numbers found above, and would ask jury members to vote for their top ten songs only in each show. To fit in with the rest of the contest, these votes would be cast as 12 points to their favourite, 10 points to their second favourite, followed by 8, 7, 6, 5, 4, 3, 2 and finally 1 point to their tenth favourite entry of the evening.
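The proposed ballot is easy to express as a mapping from a juror's top ten to points. The function name and song names are hypothetical; songs outside the top ten simply receive nothing.

```python
EUROVISION_POINTS = (12, 10, 8, 7, 6, 5, 4, 3, 2, 1)

def ballot_points(top_ten: list[str]) -> dict[str, int]:
    """Turn one juror's top ten (favourite first) into Eurovision points."""
    if len(top_ten) != len(EUROVISION_POINTS):
        raise ValueError("a ballot must list exactly ten songs")
    if len(set(top_ten)) != len(top_ten):
        raise ValueError("a song cannot appear twice on one ballot")
    return dict(zip(top_ten, EUROVISION_POINTS))

ballot = ballot_points([f"Song {n}" for n in range(1, 11)])
print(ballot["Song 1"], ballot["Song 2"], ballot["Song 10"])  # prints: 12 10 1
```

Each ballot hands out 58 points in total, however many songs are in the show.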

Eurovision buffs may now believe that we are suggesting a return to the voting system Eurovision used between 2010 and 2012. In that system each jury member voted 1-12, these votes were combined to make a new 1-12 of total jury placings, and that was then added to the televote 1-12 to make the combined 1-12. The flaw in this system is that it made it quite easy for a song with no televote support at all to get a high rating. Voting was not fully public in that era, but we know the United Kingdom gave 10 points to Switzerland in 2011 and 8 points to Spain in 2012 purely on the strength of their first place with the jury.
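As we read the description above, the 2010-2012 method converts votes into a 12-to-1 ranking twice. The sketch follows that description. The tie-breaking rules (televote first, then alphabetical) are assumptions, and the ballots are hypothetical and deliberately extreme: five identical jurors put song A first, while A receives no televote points at all.

```python
from collections import Counter

POINTS = (12, 10, 8, 7, 6, 5, 4, 3, 2, 1)

def to_points(ordered_songs: list[str]) -> dict[str, int]:
    """Give 12, 10, 8 ... 1 points to the first ten songs in order of preference."""
    return dict(zip(ordered_songs, POINTS))

def rank(scores: dict[str, int], tiebreak: dict[str, int]) -> list[str]:
    """Order songs by score; ties go to the higher tiebreak value, then alphabetically."""
    return sorted(scores, key=lambda s: (-scores[s], -tiebreak.get(s, 0), s))

def points_2010_2012(juror_top_tens: list[list[str]],
                     televote_top_ten: list[str]) -> dict[str, int]:
    """One country's points under the rank-twice method described above."""
    jury_total: Counter = Counter()
    for ballot in juror_top_tens:
        jury_total.update(to_points(ballot))
    jury = to_points(rank(jury_total, {}))       # first conversion to 12 ... 1
    televote = to_points(televote_top_ten)
    combined = Counter(jury)
    combined.update(televote)
    return to_points(rank(combined, televote))   # second conversion to 12 ... 1

jurors = [["A", "L", "K", "J", "I", "H", "G", "F", "E", "D"]] * 5
televote = ["B", "C", "D", "E", "F", "G", "H", "I", "J", "K"]
print(points_2010_2012(jurors, televote))
```

Song A still collects 10 points from this country despite having no televote support, which is the flaw described above.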

What we propose is a subtle twist to counter this. If a whole jury agrees that a song is the stand-out best, then it absolutely should do well. However, if a jury does not reach such a consensus, and its votes are scattered across songs with no clear favourite, the televote should be able to hold sway.

Our proposal would be to take a leaf from how Melodifestivalen runs 50/50 voting.

What Sweden Has And Why Sweden Is The Most Fair

In the final of Melodifestivalen a number of juries vote individually, so they can disagree with each other or agree strongly. For example, in 2009 Malena Ernman won Melodifestivalen despite finishing 8th with the juries. The juries that year did not particularly agree with each other, so the points gap between 8th and 1st was fairly small, and Malena was able to overhaul the others with the top televote and take the victory.

By contrast, last year Robin Stjernberg was a clear favourite with high points from almost all the juries, so much so that even a huge televote for Yohio was not enough to catch up.

We believe this system creates a 50/50 split that is fit for purpose. It captures how strongly a song is a ‘jury favourite’, so the jury vote is not just one long ranking but a more representative reflection of the jury members’ opinions. With a group of five people responsible for casting half of a country’s votes, we believe this is a fairer system.

We also believe this method is arguably simpler to explain. Each jury member would give 12, 10, 8, 7, 6, 5, 4, 3, 2 and 1 points to their ten favourite songs. These points are then added together to give the total from the five jury members. The televote also ranks its top ten songs with the same points, but as there is only one televote for five jury members, its points are multiplied by five (so 12 × 5 = 60 points go to the televote’s top song). The televote score is then added to the jury score, and the song with the highest combined total gets 12 points, the second gets 10 points, and so on. The points are converted into a ranking only once, at the end of the calculation, rather than twice as in the 2010-2012 system, which makes the calculation simpler.
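The proposed calculation for one country can be sketched as below. Weighting the televote by the number of jurors generalises the article's ×5 for a five-person jury (a ten-person jury would mean ×10), and the tie-breaking rule (televote first, then alphabetical) is an assumption, as are the function names. The hypothetical ballots show a scattered jury: each of the five jurors has a different favourite, while the televote's favourite, J, has the lowest jury total.

```python
from collections import Counter

POINTS = (12, 10, 8, 7, 6, 5, 4, 3, 2, 1)

def to_points(ordered_songs: list[str]) -> dict[str, int]:
    """Give 12, 10, 8 ... 1 points to the first ten songs in order of preference."""
    return dict(zip(ordered_songs, POINTS))

def proposed_country_points(juror_top_tens: list[list[str]],
                            televote_top_ten: list[str]) -> dict[str, int]:
    """Sketch of the proposed 50/50 calculation for one country."""
    combined: Counter = Counter()
    for ballot in juror_top_tens:          # 1. add up every juror's 12 ... 1
        combined.update(to_points(ballot))
    televote = to_points(televote_top_ten)
    weight = len(juror_top_tens)           # 2. televote x5 for a five-person jury
    for song, points in televote.items():
        combined[song] += points * weight
    # 3. Convert to a ranking only once, at the end. Ties are broken by the
    #    televote, then alphabetically (an assumption, not from the article).
    ranked = sorted(combined, key=lambda s: (-combined[s], -televote.get(s, 0), s))
    return to_points(ranked)

base = ["A", "B", "C", "D", "E", "F", "G", "H", "I", "J"]
jurors = [base[k:] + base[:k] for k in range(5)]  # each juror has a different favourite
televote = ["J", "I", "H", "G", "F", "E", "D", "C", "B", "A"]
print(proposed_country_points(jurors, televote))
```

Because the scattered jury votes produce totals that sit close together (15 to 43), the televote's wider spread (5 to 60) largely decides the order, and J takes the country's 12 points. A jury that agreed strongly on one song would instead give it a large total that the televote would find much harder to overturn.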

This change to the voting is not groundbreaking. When we recalculate the results of the 2014 Eurovision Song Contest with this method, only one of the twenty qualifying songs changes (Portugal qualifies in place of San Marino), and our winner is even more clear-cut than before. Yet even with this subtle change, every country in our simulation gave out different points than before. Scattered through the results are examples where songs with televote support in a country but not jury support shot up, but also examples where songs with clear jury agreement on their quality scored much higher.

Results in our New System for Semi Final One

| Country | Points | Placing |
|---|---|---|
| The Netherlands | 134 | 1st |
| Sweden | 132 | 2nd |
| Armenia | 128 | 3rd |
| Hungary | 118 | 4th |
| Ukraine | 110 | 5th |
| Azerbaijan | 66 | 6th |
| Russia | 64 | 7th |
| Iceland | 60 | 8th |
| Montenegro | 60 | 9th |
| Portugal | 56 | 10th |
| San Marino | 42 | 11th |
| Belgium | 32 | 12th |
| Estonia | 32 | 13th |
| Latvia | 30 | 14th |
| Albania | 23 | 15th |
| Moldova | 15 | 16th |

Results in our New System for Semi Final Two

| Country | Points | Placing |
|---|---|---|
| Austria | 175 | 1st |
| Romania | 122 | 2nd |
| Poland | 90 | 3rd |
| Finland | 88 | 4th |
| Switzerland | 83 | 5th |
| Belarus | 81 | 6th |
| Greece | 75 | 7th |
| Norway | 74 | 8th |
| Malta | 59 | 9th |
| Slovenia | 48 | 10th |
| Macedonia | 43 | 11th |
| Lithuania | 38 | 12th |
| Ireland | 30 | 13th |
| Israel | 21 | 14th |
| Georgia | 17 | 15th |

Results in our New System for the Grand Final

| Country | Points | Placing |
|---|---|---|
| Austria | 308 | 1st |
| The Netherlands | 232 | 2nd |
| Sweden | 211 | 3rd |
| Armenia | 190 | 4th |
| Hungary | 127 | 5th |
| Poland | 110 | 6th |
| Russia | 107 | 7th |
| Romania | 92 | 8th |
| Ukraine | 90 | 9th |
| Finland | 67 | 10th |
| Switzerland | 65 | 11th |
| Norway | 62 | 12th |
| Denmark | 62 | 13th |
| Malta | 49 | 14th |
| Azerbaijan | 49 | 15th |
| Belarus | 49 | 16th |
| Iceland | 45 | 17th |
| Spain | 45 | 18th |
| Montenegro | 40 | 19th |
| Germany | 40 | 20th |
| Greece | 30 | 21st |
| Italy | 29 | 22nd |
| United Kingdom | 29 | 23rd |
| Slovenia | 10 | 24th |
| San Marino | 7 | 25th |
| France | 1 | 26th |

Why All Of This Is Important

Each edition of Voting Insight over the summer months has taken us towards the conclusions we reach today. Poring over Excel spreadsheets and brushing up on statistical techniques has all been about trying to answer the question of what is really behind our Eurovision voting, and how we can make it better.

We are now in a position to give recommendations to the EBU based on the statistics we have completed. A new voting calculation is needed, our juries should be made larger, and the running order is a significant enough factor that it should be removed from human influence.

2014 has been the first year that any of this work has been possible, because the EBU has for the first time ever made each jury ranking and televote ranking public knowledge. In later years it will of course be essential to use the growing data set to investigate whether the same findings hold in other contests.

However, the great step towards openness and transparency symbolised by releasing these points should also encourage the EBU to keep fine-tuning and perfecting how our beloved Song Contest works. We believe that the numbers in our reports over the summer point the Eurovision Song Contest in a positive direction, towards small steps to a more fabulous future.

We have done all we can and we wish for no revolution. The evolution, though, must come from those who hold the power.
